2023 marked year 15 for Endurica. If I had to pick one word to describe the past year, that word would be “vector”. Because magnitude and direction. 😊

We updated our core value statement this year. The first one I ever wrote as part of Endurica’s original business plan listed 3 values: technical leadership, customer focus, and trustworthiness. Those values served us well for many years and in many ways shaped who we have become. But it was important this year to take stock again. We’ve grown 8-fold since I wrote those down! So our team spent many hours revisiting our shared values and deliberating over which will best define our culture and steer us right going forward. In the end, we decided to keep the first 3, and we added 3 more: embrace the grit, make an impact, and better every day.

We also completed an exercise to articulate what makes Endurica truly unique in the CAE / durability simulation space. The 3 words we chose are… Accurate, Complete, and Scalable.

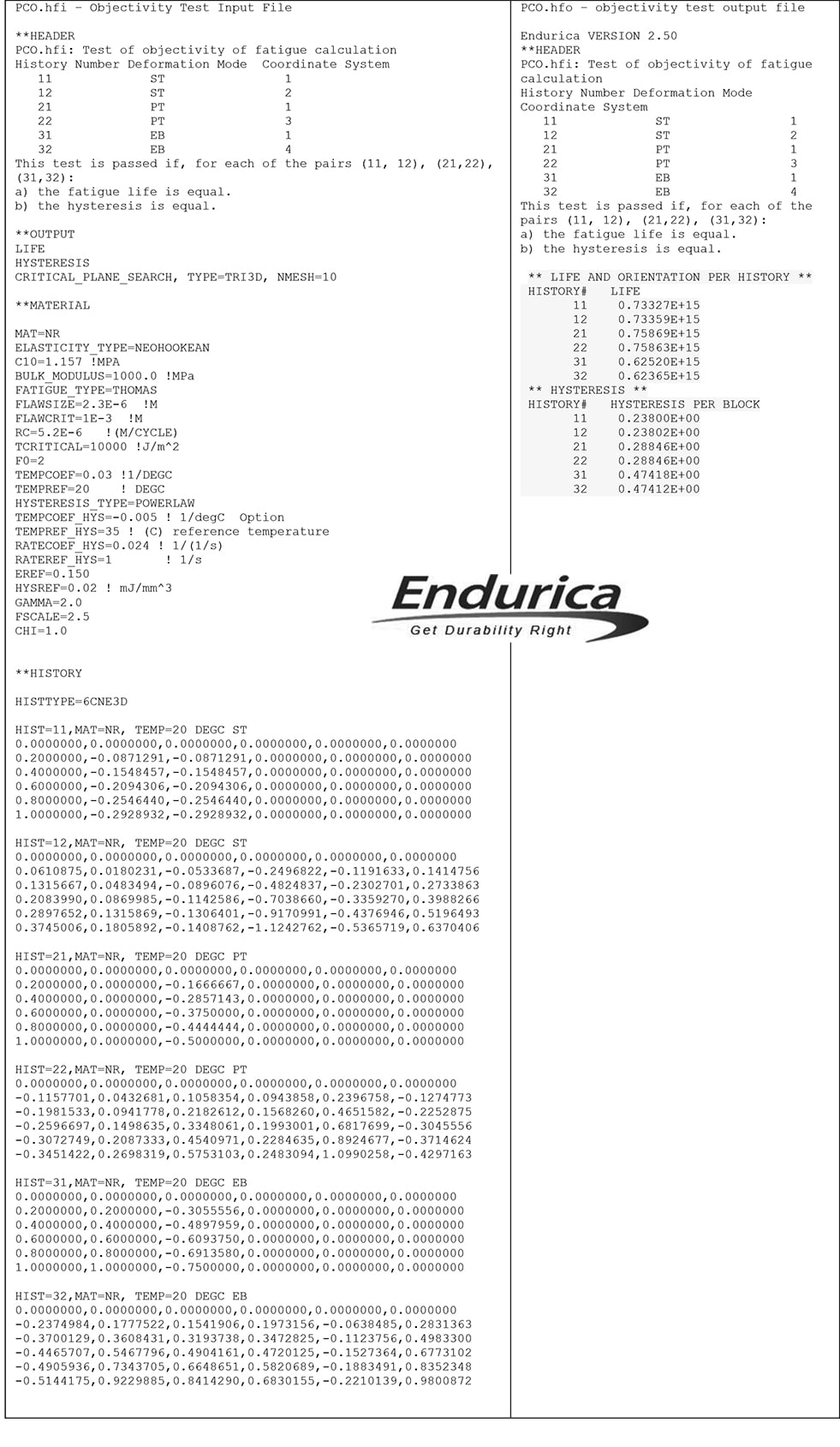

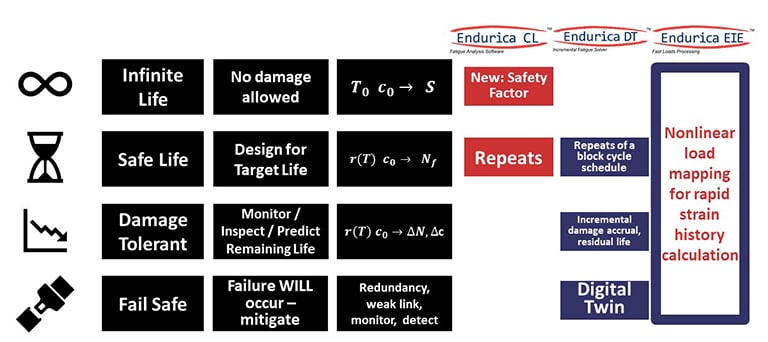

- Accurate refers to the accurate material models that capture rubber’s many “special effects”, the accurate critical plane analysis method for analyzing multiaxial history, the accurate handling of nonlinear relationships between global input load channels and local crack experiences, and the extensive set of validation cases that have demonstrated our accuracy over the years. Nobody offers a more accurate solution for rubber durability.

- Complete refers to our complete coverage of infinite life, safe life and damage tolerant approaches to testing and simulation. It refers to feature completeness that enables users to account for nearly any material behavior under nearly any service conditions. Finally, it refers to the documentation, the materials database, and the examples we distribute with the software and with our webinar series. Nobody offers a more complete solution for rubber durability.

- Scalable refers to our capacity to apply our solutions efficiently in all circumstances. Scalability is the training we provide so that users can learn our tools quickly. Scalability is access to powerful, ready-to-use workflows right when you need them. Scalability is the modular approach we take to material testing and modeling so that simple problems can be solved cheaply and complex problems can be solved accurately in the same framework. Scalability is our multithreading, which shortens job execution time so that impactful analysis gets done even on tough deadlines. Nobody offers a more scalable solution for rubber durability.

2023 was not all navel-gazing and new marketing. We also had magnitude and direction in other areas.

Top 10 Code Developments:

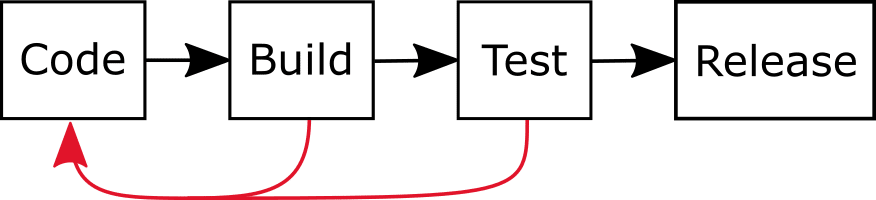

- New Endurica Architecture: After several years of development and a soft launch under the Katana project name, we finally completed our migration to the new architecture. The new architecture provides a huge speed advantage for single-threaded and now for multithreaded execution. It uses a new input file format (.json). The JSON format makes it easier than ever for users to build customized and automated workflows via Python scripting.

- Sequence Effects: Sometimes the order of events matters to durability, and sometimes it doesn’t. We introduced Steps and Blocks to our input file, giving users complete control over the specification of multi-block, multi-step scheduling of load cases. There is also a new output request that came out of this work: residual strength.

- EIE: 6 channels and support for RPC: Support for 6 channels of load input was one of our most highly requested new features. Fast-growing use of this feature led to further enhancements of the workflow (support for the RPC file format, studies of map building techniques), and new recommendations on how to implement boundary conditions for specified rotation histories in explicit and implicit finite element models.

- Queuing: Design optimization studies need efficient management and execution of multiple jobs. Endurica’s software license manager now supports queuing for licenses. Queuing allows a submitted job to automatically wait to start until a license is available, instead of the prior behavior of exiting with a license error. Now you can submit many jobs without worrying about license availability.

- Haigh Diagram Improvements: We implemented an improved discretization of the Haigh diagram, and parallelized its evaluation. Now you get much nicer looking results in a fraction of the time. For details, check out our blog post on Haigh diagrams and also read about other improvements like axis limit setting and smoother contour plots.

- Viewer image copy: There is now a button! It’s easier than ever to get your images into reports.

- Documentation Updates: We have been focusing on improving documentation this year. There are many new sections in the theory manual and user guide, as well as a getting started guide and more examples. Stay tuned for many more examples coming in 2024!

- User Defined Planes: It is now possible to define your own set of planes for the critical plane search. One example where you might want to do this would be the situation where you would like to refine the critical plane search on a limited domain of the life sphere.

- New Database Materials: We added 7 new carbon black and silica filled EPDM compounds to the database. We are now up to 42 unique rubber compounds in the database.

- Uhyper Support: The new architecture now supports user-defined hyperelasticity. If you have a Uhyper subroutine for your finite element analysis, you can use it directly with Endurica.
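To make the User Defined Planes item above a little more concrete, here is a minimal Python sketch of one way to build a refined set of plane normals on a limited domain of the sphere. It is an illustration only: the function name `cone_plane_normals`, its parameters, and the ring-based sampling are assumptions made for this example, not Endurica's API or input format. How such a list would be passed to the solver is not shown here.

```python
import math


def cone_plane_normals(axis, half_angle_deg, n_rings=4, n_per_ring=12):
    """Return unit normals inside a cone around `axis` (hypothetical helper).

    The cone is sampled as the axis itself plus `n_rings` concentric rings
    of `n_per_ring` normals each, out to `half_angle_deg` from the axis.
    """
    # Normalize the cone axis.
    length = math.sqrt(sum(c * c for c in axis))
    a = tuple(c / length for c in axis)

    def cross(p, q):
        return (p[1] * q[2] - p[2] * q[1],
                p[2] * q[0] - p[0] * q[2],
                p[0] * q[1] - p[1] * q[0])

    # Build two unit vectors u, v perpendicular to the axis and to each other.
    helper = (1.0, 0.0, 0.0) if abs(a[0]) < 0.9 else (0.0, 1.0, 0.0)
    u = cross(a, helper)
    u_len = math.sqrt(sum(c * c for c in u))
    u = tuple(c / u_len for c in u)
    v = cross(a, u)

    normals = [a]
    for i in range(1, n_rings + 1):
        # Polar angle of this ring, measured from the cone axis.
        theta = math.radians(half_angle_deg) * i / n_rings
        for j in range(n_per_ring):
            # Azimuth around the cone axis.
            phi = 2.0 * math.pi * j / n_per_ring
            normals.append(tuple(
                math.cos(theta) * a[k]
                + math.sin(theta) * (math.cos(phi) * u[k] + math.sin(phi) * v[k])
                for k in range(3)
            ))
    return normals


# Example: refine the search within 20 degrees of the z axis.
normals = cone_plane_normals((0.0, 0.0, 1.0), 20.0)
print(len(normals))  # 49 = 1 axis normal + 4 rings * 12 normals
```

The rings are evenly spaced in angle, which is simple but not uniform in area on the sphere; a different sampling may suit a real study better.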

Testing Hardware

We completed the acquisition and installation at ACE labs of a Coesfeld Instrumented Cut and Chip Analyser (ICCA). The ICCA provides unmatched measurement and control of impact conditions, and provides a way to evaluate rubber compounds for their resistance to cutting and chipping.

Applications, Case Studies, Webinars

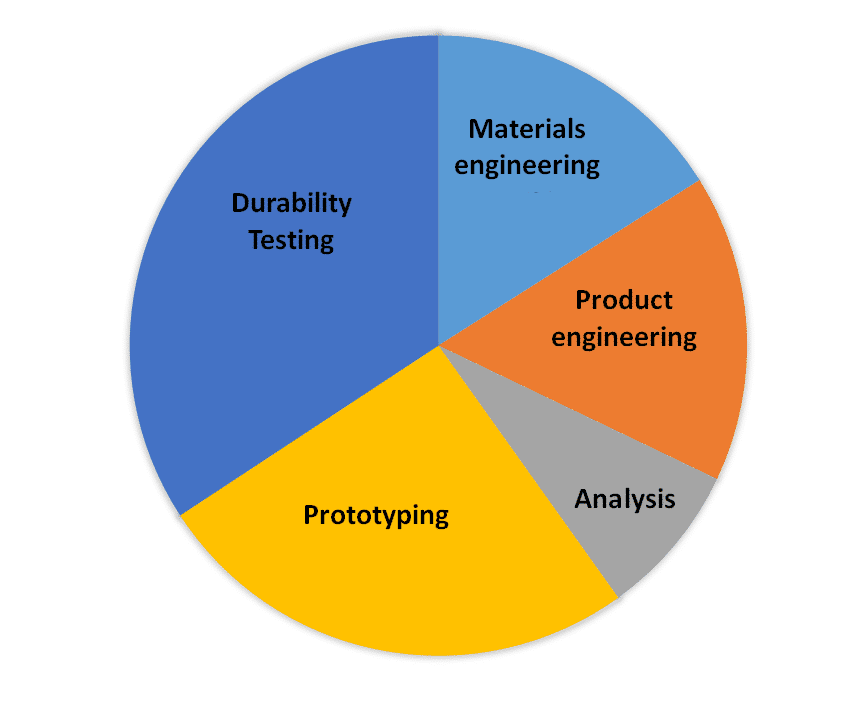

Never underestimate the students! We were blown away by the work of undergraduates at the University of Calgary with our tools and Ansys. The students designed an airless tire, completing durability simulations using Endurica software within the scope of a senior design project. They were able to Get Durability Right on a short timeline and a student budget. Check out their multi-objective, high-performance design project here.

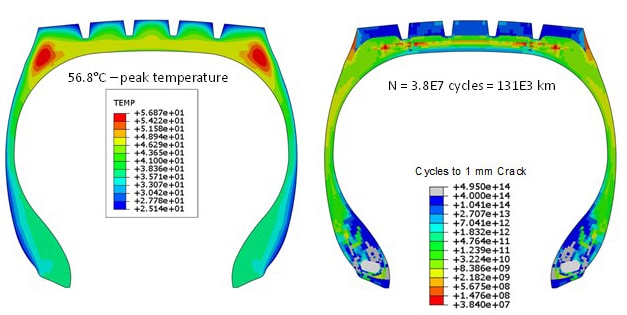

Analyzing what happens to tires as they take on the most celebrated testing track in the world might have been the funnest project Endurica’s engineers tackled in 2023. We presented the technical details at The Tire Society annual meeting and more in a follow-up webinar. An extensive Q&A session followed, and I loved the final question: “So, how long before we have a dashboard display of ‘miles to tire failure’ in our cars?” Bring it. We are ready!

Our Winning on Durability webinar series hit a nerve with the Metal Fatigue DOES NOT EQUAL Rubber Fatigue episodes on mean strain (the tendency of larger mean strains to significantly INCREASE the fatigue life of some rubbers!) and linear superposition (for converting applied load inputs to corresponding stress/strain responses). The great response has led to our third installment on the differences between rubber and metal fatigue with an upcoming presentation on temperature effects.

These days everybody’s talking about whether to meet in person or online. There are great tools available for online meetings, and these have helped us navigate Covid-19. Like everyone else, Endurica teammates regularly use online meeting technology. But if there is one thing we learned over the last year, it is that sometimes physical presence really matters. Living and working in isolation is just not healthy in the long run.

Q: With regards to fatigue life prediction methods, where was the rubber industry 10 years ago?
